There’s an intense moment when you stand up your first agentic dev crew and theory becomes reality. The approach involves sound architecture, clearly defined agentic “team members” (org structure and all), and substantive workflows. Unfortunately, all too often an agentic team does the heavy lifting to build a page that…well…looks like it was designed by someone who’s never seen the client’s brand.

We know because it’s a common refrain of the past couple of years, with countless stories in our industry of website projects gone bad. To be fair, we’ve encountered a few ourselves as we’ve quickly scaled agentic teams. Every major advancement involves a few bumps and bruises.

Our CTO, Joe Warner, laid out a strategic vision for the AIMCLEAR Dev shop: stop treating AI like a single monolithic brain and start treating it like a team of advanced specialists, each with a defined role, a clear scope, and the tools to stay in their lane. That thinking reshaped how our entire department operates. But vision doesn’t ship code. Developers do. And developers working alongside 30 to 40 agentic team members across live client projects encounter problems that no whiteboard session fully anticipates.

This post is about one of those problems: agent-built pages that drift away from the client’s brand. We’ll share how solving it continues to refine the way we think about agentic development.

The Scale Problem Nobody Talks About

AIMCLEAR currently runs approximately 30 to 40 agentic developers alongside a human dev team of four. With this transformative approach, those agents are doing the equivalent work of 40 to 50 human developers, running in parallel across real client engagements, writing an estimated 75 to 80 percent of the production code that ships.

When our leadership team first articulated this trajectory, the logic was clear: agentic crews eliminate the bottleneck of sequential development. Rather than one developer working through a queue, multiple agents execute simultaneously. Think of a front-end agent building components while a QA agent tests, a compliance agent audits, and a performance agent flags layout shift risks. Parallel cognition, distributed across purpose-built roles.

What that framing doesn’t prepare you for is what happens when all of those agents are making independent decisions about how something should look.

When AI Agents Freelance a Client’s Brand

Large language models have an element of creativity. It can be their strength when you’re generating ideas or solving novel problems. But it becomes a liability when you need pixel-level consistency across a web application. Left to their own instincts, agentic developers will produce output that looks generically competent, clean enough to pass a glance test, but disconnected from actual brand identity.

We recognized this pattern almost immediately. Buttons that were 30 pixels wide on one page and 40 on the next. Color values that drifted between hex codes that were close but not correct. Typography that defaulted to whatever the model’s training data suggested was “professional.” Unchecked, the output would be almost right, meaning it could slip through if our human team wasn’t paying close attention.

Fundamentally, this is yet another “AI slop” problem, and it’s one you can’t simply prompt away.

The Fix Was Already Sitting in Our Asset Library

Here’s where this story takes a turn that any dev lead or CTO will appreciate: the solution wasn’t some novel AI technique. It was in a PDF.

For over a decade, every competent dev shop has operated from brand style guides. When a designer hands off to a developer, the style guide is the contract: hex codes, font stacks, spacing values, component behavior, and interaction patterns. Developers typically aren’t asked to improvise; we execute against the guide. It’s a practice so foundational that even the most experienced teams can take it for granted.

Turns out, the same asset that kept human developers on-brand for the last ten years works exceptionally well for AI agents. The same PDF. The same level of specificity. The difference is in how deeply you can push it.

From Passive Reference to Active Constraint

A style guide sitting in a project folder is a passive document. A human developer might check it occasionally. An agentic developer will ignore it entirely unless you make it part of the operating environment.

Our pipeline for activating a style guide in an agentic workflow works like this:

Our designers build the visual system in Figma. Using Figma’s MCP (Model Context Protocol) tools, we can hand off design tokens and component specs directly to the LLM in a format it can parse. From there, we tap Claude Code to generate a comprehensive markdown document that captures the full style system, including colors, typography scales, spacing rules, component specifications, interaction patterns, everything.

That markdown file then gets linked inside the project’s CLAUDE.md, the instruction file that Claude Code loads into its context at the start of each session. Think of it as the agent’s standing orders. Once the style guide is wired into CLAUDE.md, it stops being a reference document and becomes an active constraint. The agent doesn’t have to remember to check the guide. The guide is already loaded into its operating context.

This is the critical shift: the style guide becomes infrastructure, as opposed to mere documentation. The deeper the specifics, the more powerful it is to feed the agentic team.
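To make the idea of a style guide as infrastructure concrete, here is a minimal sketch of the kind of markdown section such a document might contain. In our pipeline Claude Code generates the document; this hand-written renderer is only an illustration. The `DesignToken` shape, the `renderStyleGuide` function, and the token names and values are all hypothetical, and a real Figma export will look different.

```typescript
// Hypothetical token shape for illustration; a real Figma export will differ.
interface DesignToken {
  name: string;  // e.g. "color-primary"
  value: string; // e.g. "#0057B8"
  usage: string; // short, positive instruction for the agent
}

// Render tokens as a markdown table that an agent can read from its context.
function renderStyleGuide(title: string, tokens: DesignToken[]): string {
  const header = [
    `## ${title}`,
    "",
    "| Token | Value | Use it for |",
    "| --- | --- | --- |",
  ];
  const rows = tokens.map((t) => `| ${t.name} | ${t.value} | ${t.usage} |`);
  return header.concat(rows).join("\n");
}

// Example values are made up, apart from the 30px button width used in this post.
const exampleGuide = renderStyleGuide("Buttons", [
  { name: "button-width", value: "30px", usage: "All primary buttons" },
  { name: "color-primary", value: "#0057B8", usage: "Button backgrounds and links" },
]);
```

Note that the usage column states what to do rather than what to avoid, which matches the positive-pattern approach described later in this post.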

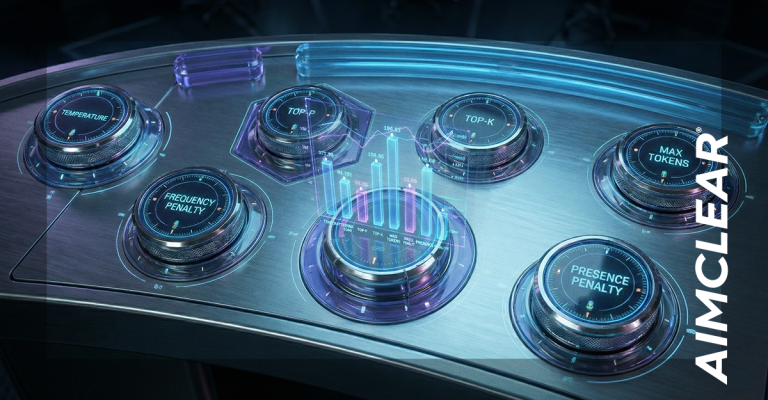

The Enforcement Stack

Linking a style guide into CLAUDE.md gets you most of the way there. But “most of the way” in production is another way of saying “still needs human intervention.” So we layer enforcement mechanisms on top.

Hooks are the first line of defense. Claude Code supports event-based triggers: pre-tool-use, post-tool-use, and completion hooks that fire at specific moments in the agent’s workflow. These act as hard stops enforced at the software level. When a streaming API response finishes, the hook fires. When the agent thinks it’s done with a task, the hook fires. This is fundamentally more reliable than prompt-level instructions, which are, to borrow a phrase from our team, “too wishy-washy” for production work.
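As an illustration of the kind of check a hook could run, here is a minimal sketch that scans generated CSS for hex colors outside an approved palette. The palette values and the function names (`normalizeHex`, `findOffPaletteColors`) are assumptions made for this example, and the wiring into Claude Code's hook configuration is deliberately left out. A hook script would call something like this on the agent's output and fail when the result is non-empty.

```typescript
// Hypothetical approved palette; in practice it would be read from the style guide.
const APPROVED_COLORS: readonly string[] = ["#0057b8", "#ffffff", "#1a1a1a"];

// Lowercase the value and expand 3-digit hex (#fff) to 6 digits so comparisons are exact.
function normalizeHex(hex: string): string {
  const digits = hex.slice(1).toLowerCase();
  if (digits.length === 3) {
    return "#" + digits.split("").map((ch) => ch + ch).join("");
  }
  return "#" + digits;
}

// Return each distinct 3- or 6-digit hex color in the source that is not approved.
// Limitations of this sketch: 8-digit hex values are ignored, and an ID selector
// made only of hex letters (for example "#add") would be reported as a color.
function findOffPaletteColors(source: string, approved: readonly string[]): string[] {
  const matches = source.match(/#(?:[0-9a-fA-F]{6}|[0-9a-fA-F]{3})\b/g) ?? [];
  return matches
    .map(normalizeHex)
    .filter((color) => approved.indexOf(color) === -1)
    .filter((color, index, all) => all.indexOf(color) === index);
}
```

A deterministic check like this catches the "close but not correct" hex drift described earlier, which is easy for a human reviewer to miss by eye.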

Visual regression testing is the second layer. Our stack, Node.js-based with hot module replacement, gives us real-time feedback when the agent’s output drifts from the design spec. When we see drift in the browser, we point the agent back to the reference document. Buttons should be 30 pixels, not 40. Here’s the source of truth. Fix it.
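The "30 pixels, not 40" comparison can also be expressed as data. The sketch below assumes the measured values have already been collected from the browser by some other tool; the `SpecRule` and `Measurement` shapes and the `findDrift` function are hypothetical names for this example, not part of any testing library.

```typescript
// Hypothetical shapes: what the style guide expects, and what was measured in the browser.
interface SpecRule {
  selector: string;
  property: string;
  expectedPx: number;
}

interface Measurement {
  selector: string;
  property: string;
  actualPx: number;
}

// Compare measurements to the spec and describe each mismatch in plain language.
function findDrift(spec: SpecRule[], measured: Measurement[], tolerancePx: number = 0): string[] {
  const report: string[] = [];
  for (const rule of spec) {
    const hit = measured.find(
      (m) => m.selector === rule.selector && m.property === rule.property
    );
    if (hit === undefined) {
      report.push(`${rule.selector} ${rule.property}: not measured`);
    } else if (Math.abs(hit.actualPx - rule.expectedPx) > tolerancePx) {
      report.push(
        `${rule.selector} ${rule.property}: expected ${rule.expectedPx}px, got ${hit.actualPx}px`
      );
    }
  }
  return report;
}
```

The report lines are written so they can be handed straight back to the agent alongside a pointer to the reference document.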

Pre-commit checks via tools like Husky catch issues before code ever reaches the repository. Schema validation, branching rules, and linting all run automatically. The agent’s code passes through the same gates as human-written code.

And then there’s a technique we’ve found disproportionately effective: the agent reviews its own work visually. Claude Code has a browsing capability that lets it open a Chrome instance, navigate to the page it just built, take a screenshot, analyze its own output against the style guide, and self-correct. We used this extensively during one of our larger client builds and the speed gains were significant. The agent catches its own mistakes before we ever see them.

The Elephant Problem (And Why Positive Reinforcement Wins)

One behavioral insight that surprised us along this journey: telling an AI agent not to do something is often counterproductive. If you instruct an agent “don’t use the Airbnb style guide,” you’ve just introduced the Airbnb style guide into the agent’s context. It’s the classic “don’t think about an elephant” paradox, and it’s very real with LLMs. It may seem counterintuitive, but anyone who’s told ChatGPT “don’t use em-dashes” knows that’s just BEGGING it to use more em-dashes!

What works instead is positive reinforcement through pattern matching. We tell the agent what to follow. “Follow the pattern used on the dashboard page” is dramatically more effective than “don’t use default styling.” The agent latches onto the positive example and replicates it. Over time, as the project builds momentum, the agent’s context fills with consistent, on-brand examples of its own prior work, and it starts making correct assumptions without being told.

This is an underappreciated dynamic of agentic development: the codebase itself becomes the training set. The more consistent work the agent produces early on, the less guidance it needs later.

The Role Nobody Trained Us For

This is the part of the story that goes beyond tooling and into what it actually feels like to work this way.

When the style guide pipeline is working (when CLAUDE.md is properly configured, hooks are enforcing standards, and the agent is self-correcting against visual output) the developer’s job changes fundamentally. You edit code rather than write it.

That sounds like a minor distinction. It isn’t. Writing code means you’re in the logic, line by line, making decisions about implementation at every step. Editing code means you’re operating at a much higher altitude, reviewing architectural decisions, catching logic errors the agent missed, optimizing performance, and ensuring the overall system hangs together. Your time shifts from construction to quality assurance, from execution to human judgment.

Joe W. calls this moving from “doer” to “overseer,” and having lived it for the past several months, the description is accurate. It’s the same cognitive shift that happens when a senior developer starts managing a team of juniors. We now find ourselves writing less code because our leverage, and our greater value, is in a different place.

For us, this meant leveling up in ways we didn’t fully expect. When you have agentic team members handling SEO analysis, accessibility auditing, QA testing, and security review alongside you, it’s much more like you’re leading a team of specialists. Each of us, individually, operates with the combined expertise of a small engineering department. That’s the multiplier effect that completely redefined the breadth and quality we can enforce on every project.

The Foundation Matters More Than the Framework

If there’s one thing we’d tell any dev team preparing to go agentic at scale, it’s this: your foundation determines your ceiling.

Think about it in the same way a baker approaches baking a cake. We need the right ingredients. The initial setup involves your tech stack, architecture decisions, style system, and configuration files (mixing the batter). It requires hands-on work, deep knowledge of your ingredients, and deliberate choices. You need CSS variables that let you change a font color site-wide by editing a single variable definition instead of running a global search-and-replace.
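The single-variable point can be sketched in a few lines. This example assumes a flat map of token names to values and a hypothetical `toCssVariables` helper; it shows one possible way to emit CSS custom properties, not our actual build step.

```typescript
// Turn a flat token map into a :root block of CSS custom properties.
// Token names and values here are made up for illustration.
function toCssVariables(tokens: Record<string, string>): string {
  const declarations = Object.keys(tokens).map(
    (name) => `  --${name}: ${tokens[name]};`
  );
  return [":root {"].concat(declarations, ["}"]).join("\n");
}

const rootBlock = toCssVariables({
  "font-color": "#1a1a1a",
  "button-width": "30px",
});
```

Components then reference `var(--font-color)` instead of a literal value, so changing the color site-wide means editing one entry in the token map.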

Once that foundation is solid (the cake is baked), the subsequent work is frosting and sprinkles. The agents learn your preferences. They reference prior patterns. Small updates propagate cleanly because the underlying system was designed for it. The teams that skip the foundation work, that try to go fast before they go right, end up with agents building on sand. And agents building on sand produce exactly the kind of inconsistent, fragile output that gives agentic development a bad reputation.

Start right. Go deep on the setup. The speed comes after.

What This Means for the Work

We should be direct about what this capability translates to for the organizations we serve.

AIMCLEAR’s agentic dev operation means that clients who previously couldn’t afford the depth of development they needed now have access to it. This includes thorough, multi-disciplinary engineering that takes into account SEO, accessibility, security, performance, and brand consistency simultaneously. A four-person dev team producing at the scale of a 40-to-50-person shop is now an operating reality. It means faster turnarounds, higher baseline quality, and the kind of attention to detail that was historically reserved for enterprises with engineering budgets to match.

Every week, our agents get smarter because our codebases get more consistent. Every project, the foundation work gets faster because we’ve built the patterns before. The flywheel is turning.

Where This Goes Next

We’re on the cusp of fully standardized integration of agentic crews across AIMCLEAR’s entire development operation (measured in weeks – not months or years). The style guide pipeline we’ve described here is one pattern. There are others, such as compliance enforcement, accessibility auditing, and performance optimization. Each has its own seeding strategy, its own enforcement stack, and its own lessons learned.

The teams and organizations that figure this out early will code smarter, with built-in quality controls that compound over time. The style guide example above shows that giving agentic team members better constraints up front pays off in tangible improvements. The best agentic teams will be those with the most disciplined architecture around their models.

We intend to keep building that architecture. And we intend to keep sharing what we learn.

Tim Eastvold and Jack Skaret are developers at AIMCLEAR, where they manage agentic development crews across client projects. AIMCLEAR is a digitally integrated marketing agency built on a foundation of engineering excellence.